ChatGPT Is Not an Oracle

The other day, I overheard a senior professional say: “I didn’t know how to answer that question because I didn’t know what they meant by that term in that context, so I asked ChatGPT for clarification.” Another day, a friend told me he wanted to change his dog’s diet because the dog is getting old. He was planning to ask ChatGPT to calculate the proportions for the diet, and he asked me what I thought about it. I didn’t even have time to reply: he saw my worried expression and continued, “Yes, then I can ask Claude or DeepSeek to compare answers and estimate the accuracy.” At that point, my worried expression turned into something close to despair.

These are just two examples of what I’ve heard in recent weeks: an expert relying on generated answers as a source of truth, and a friend treating agreement between different AI systems as an estimate of the accuracy of the generated information. Instinctively, my reaction is, “Absolutely not. Please stop doing that now.”

Then my researcher side takes over and asks: Where are the papers showing that this is a problem? And indeed, there are plenty of them. Extensive research documents the problematic behavior of large language models (LLMs), the models that power chatbot interfaces such as ChatGPT, Claude and DeepSeek.

Mistakes and Complacency

For example, article citations generated by LLMs are often hallucinated or misplaced; summaries produced through retrieval-augmented generation (RAG) web searches may not accurately reflect the content of the original webpages; factual information can be fabricated; low-frequency knowledge in the training data is difficult for LLMs to learn; and LLMs exhibit sycophancy by agreeing with users even when the response is incorrect. This list of limitations is not exhaustive and continues to grow.

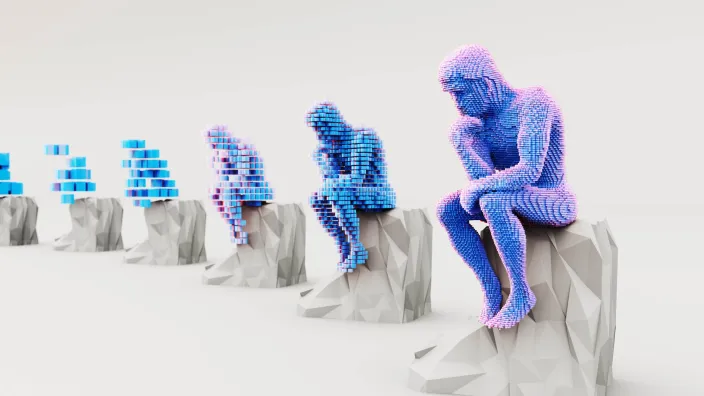

LLMs have mostly been trained on documents (webpages) crawled from the internet, so the information they learn is the information present in those webpages. The great majority of these pages are blog posts and forum threads that have never been checked for accuracy or truthfulness. Only a very small portion of the training data is considered “high-quality” because it comes, for example, from accredited news outlets, Wikipedia pages, books and peer-reviewed articles. Moreover, even though LLMs are trained by different organizations, these models are so greedy for data that their creators use as much of the data available on the internet as possible (filtered for harmful content). Consequently, LLMs created by different organizations end up having similar biases and respond to user queries in similar ways, as shown by previous studies. Going back to the story about my friend and his dog, this means that agreement between models tells us little: if one model is wrong, another one will most likely be wrong as well, unless it has been post-aligned with the correct information. We have no way of knowing whether it has, since most LLM chat interfaces available online, such as ChatGPT and Claude, are proprietary and their training is not disclosed.
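To make the point about comparing chatbots concrete, here is a toy calculation in Python. It is only a sketch under made-up assumptions: the function name, the three parameters (`p_correct`, `shared`, `collide`) and the numbers plugged into them are hypothetical and do not come from any study. The idea is to ask how likely an answer is to be right, given that two models agree on it, depending on how often both models simply reproduce the same learned answer.

```python
def p_correct_given_agreement(p_correct: float, shared: float, collide: float) -> float:
    """Probability that an answer is right, given that two models agree on it.

    All three parameters are hypothetical knobs for this toy model:
    p_correct: chance that a single model answers correctly.
    shared:    chance that both models reproduce the same learned answer
               (for example, because they were trained on the same webpages).
    collide:   chance that two independently wrong answers happen to coincide.
    """
    p_wrong = 1.0 - p_correct
    # Both models give the same answer and that answer is right.
    agree_and_right = shared * p_correct + (1.0 - shared) * p_correct * p_correct
    # Both models give the same answer and that answer is wrong.
    agree_and_wrong = shared * p_wrong + (1.0 - shared) * p_wrong * p_wrong * collide
    return agree_and_right / (agree_and_right + agree_and_wrong)


if __name__ == "__main__":
    # Illustrative values only: each model is right 80% of the time.
    for shared in (0.0, 0.5, 1.0):
        value = p_correct_given_agreement(p_correct=0.8, shared=shared, collide=0.1)
        print(f"shared={shared:.1f} -> P(correct | models agree) = {value:.3f}")
```

With these illustrative numbers, agreement between two fully independent models (`shared=0.0`) would be strong evidence, at roughly 0.99. When the models always reproduce the same learned answer (`shared=1.0`), agreement adds nothing: the probability stays at 0.8, the accuracy of a single model.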

The Limits of Chatbots

My point is not that we should stop using ChatGPT and other chat interfaces. They are language technologies that are here to stay, and they can help us be more creative, productive and efficient. However, there are two main differences between more traditional sources and LLM-powered chatbots. First, these tools digest and summarize information for us to a much greater degree than books do, which makes them more appealing because of their convenience and efficiency. After all, we humans like to take shortcuts to lower our cognitive load. Second, every interaction with an LLM is new: the same question can be answered in different ways even when it is asked with exactly the same prompt (you can test it yourself). Because each answer is generated on the spot, it has not been peer-reviewed or checked, nor has it undergone any editorial process or decision.

Risky Brainstorming

That said, my main point is that we must be even more critical of these tools than we are of traditional media. In practice, this means verifying generated content against authoritative sources rather than taking it at face value. How to do this depends on the task. Tasks such as paraphrasing or translating text are generally low-risk because the tool only transforms information we have already written, though the output should still be reviewed for potential misunderstandings.

On the other hand, asking LLMs to brainstorm ideas, explain concepts or retrieve facts is high-risk, as the resulting information may contain errors and misinformation, either due to hallucinations or simply because the LLM has learned incorrect information. Therefore, the truthfulness of the generated answers in high-risk tasks should be evaluated carefully and confirmed through other authoritative sources. I am aware that the process takes longer… but is reaching a post-truth reality a fair trade-off for being more “efficient”?