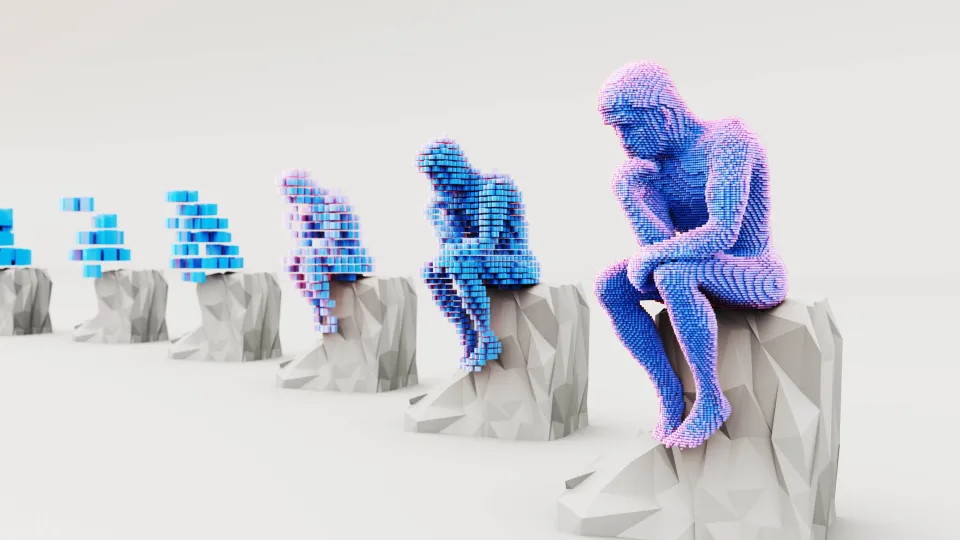

Thinking Through Machines

At the start of a typical workday, writing tasks now take a different form. Emails are drafted faster. Report outlines take shape in a few steps. Meeting notes are shorter and easier to share. Somewhere in the background, a large language model has assisted the process, not necessarily as a visible tool but as a silent collaborator. Increasingly, we do not just work with these systems. We work through them.

How LLMs Work

Large language models (LLMs) are a class of artificial intelligence systems designed to process and generate human language. They belong to the broader category of generative AI: technologies that produce new content rather than simply classifying or retrieving existing information. Their development builds on decades of research in natural language processing, a field that has moved from rule-based systems to statistical models and, more recently, to data-driven neural architectures trained at scale.

LLMs are trained on vast collections of text, such as books, articles, websites and documents. During training, they learn to predict which word (more precisely, which token, a word or word fragment) is likely to come next in a sequence. Through this process, they acquire a statistical representation of language, including its common patterns, structures and associations. This allows them to generate fluent text, but it does not fully explain why recent systems are so easy to use in everyday settings.
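To make "predicting the next word" concrete, here is a deliberately tiny sketch. It is a bigram counter, not a neural network: it only looks at the single previous word, whereas real LLMs use neural networks over tokens and far longer contexts, trained on vastly more text. The corpus and the function names `train_bigram` and `next_word_probs` are hypothetical, chosen for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count how often each word follows each other word (a toy 'training' step)."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Pair every word with the word that comes right after it.
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, word):
    """Turn raw follow-counts into a probability distribution over next words."""
    following = counts.get(word.lower(), Counter())
    total = sum(following.values())
    if total == 0:
        return {}  # word never seen with a successor
    return {w: c / total for w, c in following.items()}

# Hypothetical three-sentence "training corpus".
corpus = [
    "the model predicts the next word",
    "the model learns patterns",
    "the next word follows the model",
]
counts = train_bigram(corpus)
print(next_word_probs(counts, "the"))  # → {'model': 0.6, 'next': 0.4}
```

In this toy corpus "the" is followed by "model" three times and by "next" twice, so the sketch assigns them probabilities 0.6 and 0.4. Scaling the same idea up, with learned representations instead of raw counts, is what gives LLMs their statistical picture of language.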

Human Feedback

What made recent large language models far easier to use than earlier approaches was the addition of human feedback. After the main training phase, people evaluate model outputs and indicate which answers work better, and the model is then further trained to favor these preferred behaviors. As a result, recent LLMs are better at following instructions, answering questions and interacting conversationally.
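The text does not say how human preferences become a training signal. One common approach, assumed here, is to fit a reward model on pairwise comparisons ("answer A was better than answer B") with a Bradley-Terry style loss, and then use that reward model to fine-tune the LLM. The sketch below covers only the reward-model step, using a toy linear scorer over two hypothetical hand-made features; `score`, `train_reward_model` and the feature names are illustrative, not any library's API.

```python
import math

def score(weights, features):
    """Toy reward model: a weighted sum of answer features."""
    return sum(w * f for w, f in zip(weights, features))

def train_reward_model(pairs, n_features, lr=0.1, epochs=200):
    """Fit weights so human-preferred answers score higher (Bradley-Terry loss)."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for preferred, rejected in pairs:
            margin = score(weights, preferred) - score(weights, rejected)
            # Probability the model assigns to the human's choice: sigmoid(margin).
            p = 1.0 / (1.0 + math.exp(-margin))
            # Loss is -log(p); its gradient w.r.t. weight i is
            # -(1 - p) * (preferred[i] - rejected[i]), so we step the other way.
            for i in range(n_features):
                weights[i] += lr * (1.0 - p) * (preferred[i] - rejected[i])
    return weights

# Hypothetical features: [follows_instruction, is_rude].
# Each pair is (answer people preferred, answer people rejected).
pairs = [
    ([1, 0], [0, 0]),  # following the instruction was preferred
    ([1, 0], [1, 1]),  # the polite answer was preferred
    ([0, 0], [0, 1]),  # again, the polite answer was preferred
]
weights = train_reward_model(pairs, n_features=2)
print(weights)
```

After training, the first weight is positive and the second negative: the scorer has learned to reward instruction-following and penalize rudeness purely from which answers people preferred. In a real system the scorer would itself be a large neural network, and its scores would guide further training of the LLM.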

Because recent LLMs are easy to use and widely accessible, they are now integrated into many everyday language activities, such as writing, reading, summarizing, planning and studying. Their repeated use changes how cognitive work is carried out. Instead of starting from a blank page, people increasingly work through outlines, drafts and revisions suggested by the system. In this way, LLMs not only speed up tasks but also reshape how ideas are formed, organized and refined.

The Benefits

Recent studies provide early evidence of these effects. A study by MIT researchers on professional writing tasks performed by white-collar workers shows that access to generative AI can increase productivity and improve output quality. The same study also finds that performance gaps between workers narrow, suggesting that language models may help less experienced workers reach higher baseline standards. In educational settings, a recent study published in a Nature journal reports that generative AI can support learning activities while also reshaping how students approach writing, revision and feedback.

The Issues

Yet these benefits come with real concerns, because generative AI is likely to affect people in different ways. An interdisciplinary study published in PNAS Nexus shows that while generative AI can boost productivity, its benefits and risks are unevenly distributed across workers and sectors. Differences in roles, skills and access to technology shape who gains the most from AI-assisted work. This unevenness is also visible in adoption patterns. A large empirical study of generative AI use in Italy finds widespread adoption for everyday work and information tasks, alongside digital divides linked to uneven AI literacy. These divides intersect with existing inequalities, including those related to gender.

These differences matter because language models do not simply assist individuals in isolation. They shape shared ways of writing, explaining and organizing information. As their use spreads, the language they produce becomes more visible, more reusable and more influential. When language generation is automated at scale, the main risk is therefore not only unequal access but also growing uniformity. LLMs tend to reproduce dominant linguistic norms, privileging widely represented styles, topics and perspectives. As a result, minority languages, unconventional expressions and culturally specific forms of knowledge are more likely to be marginalized or lost.

The Challenge of Governing Models

As language increasingly functions as infrastructure, its governance becomes a collective concern. The challenge ahead is not whether we will use these models, but how we choose to shape them. The transition from a society that talks to machines to one that thinks through them has already begun. Understanding its implications is a first step toward steering this transformation responsibly.