More Math for a Smarter AI

Large language model–based AI systems have made extraordinary progress in recent years. They are exceptionally good at recognizing patterns, extracting structure from data and interpreting natural language. However, many real-world problems are not just about recognizing patterns; they are about making decisions under constraints, trade-offs and scarce resources. In these settings, plausibility is not enough: decisions must be feasible, reliable and often provably optimal.

Mathematical Optimization as a Basis for Reliable Decisions

Mathematical optimization methods, by contrast, are explicitly designed for this purpose. Linear and integer programming models, for example, provide an effective way to express real-world decision problems in mathematical terms. Once a problem is formulated this way, solvers can compute solutions with provable guarantees: either a proof that the solution is optimal or a bound on how far it can be from the optimum. Mathematical programming is therefore a cornerstone of industrial decision-making. Solvers for these models are widely used in logistics, energy systems, manufacturing, finance and technology to compute reliable, optimal solutions. However, a technical bottleneck often limits the broader adoption of mathematical optimization technologies.
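For reference, the standard textbook form of a mixed-integer linear program is shown below. The notation is introduced here for illustration and is not taken from the article: $x \in \mathbb{R}^n$ holds the decision variables, $c$ the objective coefficients, $A$ and $b$ the constraint data, and $I$ the set of variables required to be integer. When $I$ is empty, the model is an ordinary linear program.

```latex
\begin{aligned}
\min_{x \in \mathbb{R}^n} \quad & c^\top x \\
\text{subject to} \quad & A x \le b, \\
& x \ge 0, \\
& x_j \in \mathbb{Z} \quad \text{for all } j \in I .
\end{aligned}
```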

The Bottleneck of Modeling

The challenge lies in the modeling step: formulating a complex decision problem in the precise mathematical language that optimization solvers can process. Concretely, a solver takes as input an objective function and a set of constraints with a precise structure (in a linear program, for instance, all of them must be linear in the decision variables) and computes an optimal solution. The user must first translate the real decision problem into this formal language. Recognizing which real-world problems can be expressed in this structure, and how to express them correctly, requires substantial expertise, mathematical insight and a deep understanding of both the application domain and the underlying tools.
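To make the modeling step concrete, here is a minimal sketch of a hypothetical toy problem that is not from the article: a workshop decides how many chairs and tables to build, subject to limited wood and labor. All names and numbers are illustrative assumptions. The point is the structure the modeler must produce: integer decision variables, a linear objective and linear constraints. Because the problem is tiny, exhaustive enumeration stands in for a real solver here; it certifies optimality only by checking every candidate, which does not scale.

```python
from itertools import product

# Hypothetical toy problem (illustrative assumption, not from the article):
# choose integer quantities of chairs and tables to maximize profit.
PROFIT = {"chairs": 30, "tables": 50}

# Each constraint: (resource name, usage per unit of each product, capacity).
CONSTRAINTS = [
    ("wood", {"chairs": 2, "tables": 4}, 16),
    ("labor_hours", {"chairs": 3, "tables": 2}, 18),
]

# Upper bounds on each decision variable, implied by the wood constraint.
BOUNDS = {"chairs": 8, "tables": 4}


def objective(x):
    """Linear objective: total profit of plan x."""
    return sum(PROFIT[v] * x[v] for v in x)


def is_feasible(x):
    """Check every linear constraint sum_j a_ij * x_j <= b_i."""
    return all(
        sum(coeffs[v] * x[v] for v in x) <= capacity
        for _, coeffs, capacity in CONSTRAINTS
    )


def solve_by_enumeration():
    """Return an optimal integer plan by checking every candidate.

    Exhaustive search is exact here only because the problem is tiny;
    practical MILP solvers rely on far more scalable methods.
    """
    names = list(BOUNDS)
    best_plan, best_value = None, float("-inf")
    for values in product(*(range(BOUNDS[v] + 1) for v in names)):
        plan = dict(zip(names, values))
        if is_feasible(plan) and objective(plan) > best_value:
            best_plan, best_value = plan, objective(plan)
    return best_plan, best_value


if __name__ == "__main__":
    plan, value = solve_by_enumeration()
    print(plan, value)  # {'chairs': 4, 'tables': 2} 220
```

Swapping the enumeration for a real solver would leave `PROFIT`, `CONSTRAINTS` and `BOUNDS` unchanged; writing those correctly is exactly the modeling work described above.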

LLMs & Optimization: The New Frontier of Decision-Making AI

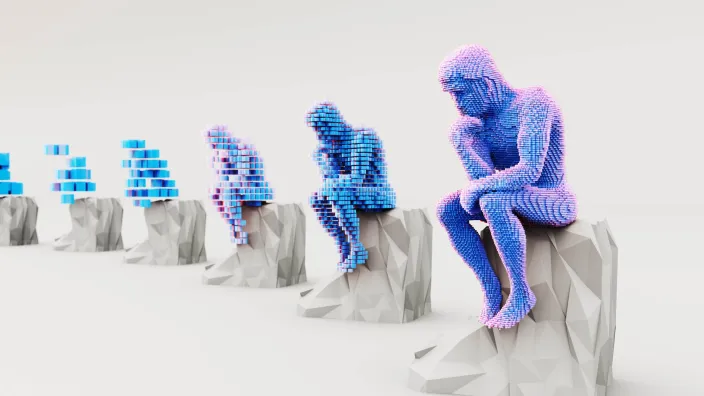

This is where the landscape is now changing. Over the past few years, LLMs have become significantly better at interpreting problem descriptions and translating them into structured mathematical formulations. Not long ago, LLMs could help build intuition or restate a problem informally, but they struggled to produce correct, ready-to-use formulations for complex decision problems. Today, they can propose decision variables, objectives and constraints that are essentially ready to pass to a solver, and they refine these formulations through interaction with human experts. When given direct access to a solver, they can even use its feedback, such as error messages or infeasibility reports, to identify and correct errors in their own formulations. Importantly, LLMs are not replacing mathematical optimization; they are learning how to use it.

This combination marks the next step in AI for real decision-making. LLMs contribute modeling, abstraction and interpretation, while optimization solvers contribute rigor, guarantees and reliability. The resulting AI agents do not merely act; they act optimally or near-optimally, with explainable and verifiable decision processes.

The lesson extends naturally to AI agents more broadly. True agency does not come from pattern recognition alone, nor from heuristic decision-making without guarantees. It comes from the ability to translate goals into precise structured formulations and solve them using rigorous methods. LLMs are rapidly improving at the first step. Mathematical optimization continues to provide powerful tools for the second.

The future of reliable AI agents for decision-making lies in their combination. More math does not constrain AI; it empowers it.